When I discuss Augmented Reality projects with people, I often realize that this concept is not clear to everybody. So, let’s talk a bit about Augmented Reality!

Human beings have been fantasizing about mixing virtual worlds into the real world for decades. In 1951, Bradbury published The Veldt [Bradbury 1951]. In this short story he imagines a nursery room where children can go and visit faraway places, learning about the world without leaving the house. As promising as this sounds, Bradbury also plays with our anxiety about a fading frontier between reality and virtuality: what is real, and what is not? These questions suddenly become much more relevant when your children are facing lions in the savanna...

The Veldt – Ray Bradbury

Later on, in the book “Do Androids Dream of Electric Sheep?” (which you may know better as “Blade Runner”) [Dick 1968], we discover the empathy box. The empathy box is a system that creates a virtual world for you, where you can feel and live the experiences of another person from their perspective. This follows up on an important question of the book: what is empathy?

Do Androids Dream of Electric Sheep? – Philip K. Dick

So, what can happen if we mix the real and the virtual? Will the real be eaten up by the virtual? Will we achieve total empathy? Will it blend? Well, let’s see!

Before going into technical details, let’s look at a few examples.

Pokemon Go - Niantic

Augmented Creativity - Disney Research

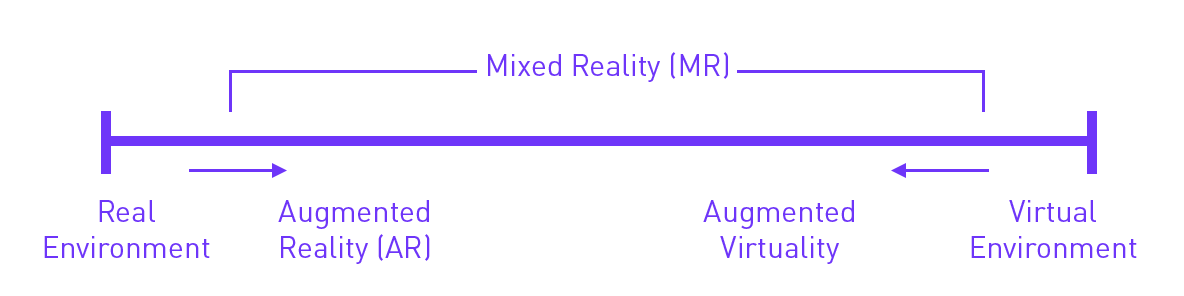

In 1995, Milgram and Kishino defined a continuum between reality and so-called virtuality [Milgram 1995]. I quite like this idea of a continuum, with no clear cut between real and virtual. What is interesting to note here is that Augmented Reality is positioned just after the real environment: that is, Augmented Reality only adds virtual content onto the real world.

Reality-Virtuality Continuum – Reproduced from [Milgram 1995][1]

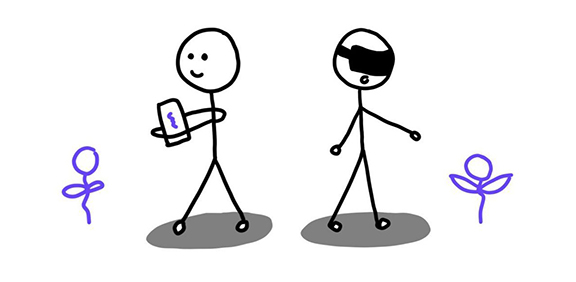

And here lies the major distinction with Virtual Reality: in Virtual Reality, the real world is occluded and replaced by the virtual world. In Augmented Reality, the real world is still visible, and just augmented (huhu) with virtual content.

Augmented Reality vs Virtual Reality

Another definition of Augmented Reality that I like is the one proposed by Azuma in 1997 [Azuma 1997]. Azuma says that a system can be described as Augmented Reality if:

- It combines real and virtual – we saw that already, it keeps it real

- It is interactive in real time – so, ideally, you can seamlessly interact with the real and the virtual content without breaking the magic

- It is registered in 3 dimensions – this means that the system has some understanding of the position of the virtual elements in the real environment

Let’s look at an example again!

Behind the Art - ETH Game Technology Center

Here, you can see how Augmented Reality is used to bring information and interactivity to real-life paintings. Augmented Reality brings in interesting interaction: for example, when you go closer to a specific part of a painting, you get information about that bit you are interested in. That means you can explore the painting at your own pace and in an order that makes sense to you.

Ah, and one more thing before moving on to the discussion: this mobile-based thing is not the only way to do Augmented Reality [Van Krevelen 2007]!

See-through vs Spatial Augmented Reality

So far, I only showed see-through Augmented Reality. “See-through” means that you see the real world through a display that is then used to overlay virtual content on top. This is the Pokemon Go example, where you see the content on the screen of your phone.

But there is also spatial Augmented Reality. In this case, the virtual content is projected directly onto the real world. In the following example, “Flippaper”, you draw your pinball level on paper, in the real world, and it is augmented with projection to be turned into a playable game. Spatial Augmented Reality is nice because you don’t have to carry a device around. But because it relies on projectors, it is very sensitive to lighting conditions.

Flippaper - Jérémie Cortial & Roman Miletitch

> the extra bit_

But how does this work? For most of my human readers, when you look around, you are able to identify what you see: this is a table, this is a cat, this is another person that happens to be wearing a dress. If I gave you a picture of a table and asked you to draw something on the table, you’d be able to do it reasonably correctly.

For computers, it’s a bit more difficult. The computer sees pixels, and has little understanding of the content of the image. If you want your computer to display something on a table, you need to teach it how to do this.

To do Augmented Reality, we need the computer to be able to do the following:

- Identify where in the real world it should add virtual content

- Add the virtual content

- Move the virtual content appropriately when we move around the real world

This is called tracking. There are various ways of doing this. I will only present one here, just to give you a bit of an idea, but you can find links at the end of the article to go a bit further if you are curious.

Let’s look into the case of tracking using image targets. This is when we are using a specific image (called “target”) in the real world to add content on top of it. For example, in the museum example earlier, the image targets are the paintings: this is what is recognized by the computer to position the augmentation.

Ok, let’s check this out now!

Let’s say we want to make a music game: you are in a room with different pictures of instruments, and, as a music conductor, you can point at them with your phone to make them play.

First, you would need to define an image target: this is an image that makes sense for your application, but that is also easily identifiable by the computer. For example, for my game, I would like a picture of drums, piano, and violin. I’m going to use the amazing art of Aquasixio for the sake of this example[2].

Rage, FiddleBack, Le Pianoquarium - Aquasixio

How does the computer recognize my picture now? Well, it’s using so-called “features”. These are areas that are highly specific to the picture: they should help the computer identify the picture and its orientation. When we then film something with our phone, the phone constantly looks for those features in the video feed to see if the image target is there and if it should display the augmentation or not. For example, I used Vuforia, a widely used augmented reality development kit, to identify the features of my “drums” image target:

The features of the drums image target

See how the features are sticking to pointy things and strong edges? This is because these areas are easily identifiable by the computer, no matter the orientation of the image. Interestingly enough, you can also see that Vuforia uses a greyscale version of the image. This is because colors don’t help much to identify a picture: if we used colors to identify an image, it would stop working as soon as you are in a room with funkier lighting, because the colors perceived by the phone would be completely different. At this point, all that is left to do is to add and move the virtual content.
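To make the greyscale and "pointy things" ideas a bit more tangible, here is a tiny sketch in plain Python. It is a toy of my own and certainly not what Vuforia does internally: the function names (`to_grey`, `corner_score`, `strongest_corner`) and the 5x5 test picture are made up for the illustration. It converts a picture to grey levels, scores each pixel by how much the brightness changes both horizontally and vertically, and checks that the best-scoring spot stays put when the lighting tints the colors.

```python
# Toy illustration only: real AR toolkits use far more robust detectors.

def to_grey(rgb_image):
    """Turn rows of (r, g, b) pixels into grey levels (Rec. 601 luma weights)."""
    return [[0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row]
            for row in rgb_image]


def corner_score(grey, x, y):
    """High only where brightness changes both horizontally and vertically."""
    dx = abs(grey[y][x + 1] - grey[y][x - 1])
    dy = abs(grey[y + 1][x] - grey[y - 1][x])
    return min(dx, dy)


def strongest_corner(grey):
    """Return the (x, y) position of the interior pixel with the best score."""
    height, width = len(grey), len(grey[0])
    scored = [(corner_score(grey, x, y), x, y)
              for y in range(1, height - 1)
              for x in range(1, width - 1)]
    _, x, y = max(scored)
    return (x, y)


# A 5x5 black picture with a white square in its bottom-right corner.
WHITE, BLACK = (255, 255, 255), (0, 0, 0)
picture = [[WHITE if x >= 2 and y >= 2 else BLACK for x in range(5)]
           for y in range(5)]

# The same picture under "funky" lighting: green and blue are dimmed.
tinted = [[(r, g * 0.5, b * 0.3) for (r, g, b) in row] for row in picture]

print(strongest_corner(to_grey(picture)))
print(strongest_corner(to_grey(tinted)))
```

Both calls point at the corner of the white square, at (2, 2), even though the raw colors of the two pictures differ. Flat areas and straight edges score zero here, which is the intuition behind features sticking to corners.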

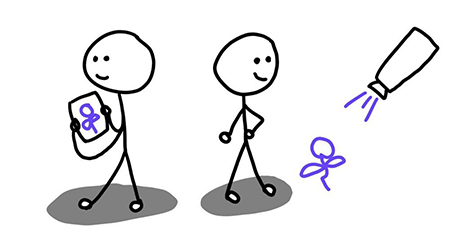

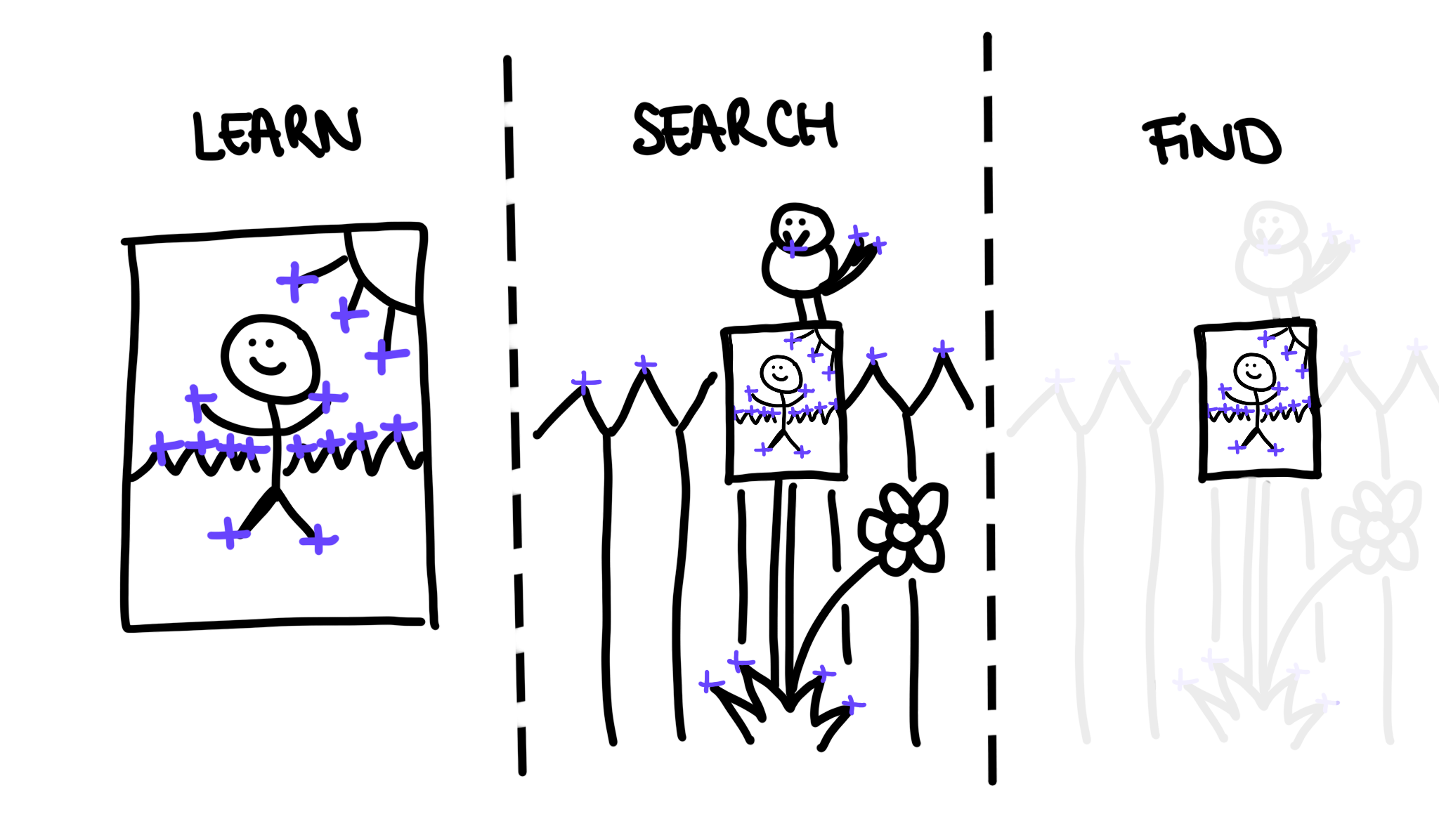

Now we are outside, pointing excitedly at things with our phone, hoping for some magic to happen! As we move around, the phone will analyse the video feed, trying desperately to find the image we taught it to recognise. To do so, it will try to find the features it remembers from the picture. Once it has done that, it will look for an area where there are many of the features, and where they are arranged similarly to how they were on the picture. If there are many similarities with the set of features of the picture, the phone will proudly announce that it found the picture.

1. The phone learns the features of the image target. 2. It searches for all the features looking like the ones of the image in the outside world. 3. It finds the area where the arrangement of features looks suspiciously like the one on the original picture.
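The "search for features that look alike" step can be sketched roughly as follows. This is a simplified illustration under my own assumptions, not the algorithm of any particular toolkit: each feature is described by a short binary fingerprint (here an 8-bit integer I invented), two features "look alike" when their fingerprints differ by only a few bits, and the picture counts as found when enough of its features have a look-alike in the video frame. The names `match_features` and `target_found` and the thresholds are hypothetical.

```python
def hamming(a, b):
    """Number of bits that differ between two binary fingerprints."""
    return bin(a ^ b).count("1")


def match_features(target_features, frame_features, max_distance=2):
    """Pair each target feature with its closest frame feature, if close enough.

    Both arguments are lists of ((x, y), fingerprint) tuples.
    Returns a list of (target_position, frame_position) pairs.
    """
    matches = []
    for t_pos, t_desc in target_features:
        best = min(frame_features,
                   key=lambda f: hamming(t_desc, f[1]),
                   default=None)
        if best is not None and hamming(t_desc, best[1]) <= max_distance:
            matches.append((t_pos, best[0]))
    return matches


def target_found(matches, n_target_features, min_ratio=0.6):
    """Declare the picture found when enough of its features were matched."""
    return len(matches) >= min_ratio * n_target_features


# Step 1: features learnt from the image target (made-up values).
target = [((10, 10), 0b10110010), ((90, 10), 0b01001101),
          ((90, 60), 0b11100001), ((10, 60), 0b00011110)]

# Step 2: features seen in the video frame, slightly noisy, plus clutter.
frame = [((210, 120), 0b10110011), ((290, 125), 0b01001101),
         ((288, 170), 0b11100101), ((50, 300), 0b00000000),
         ((400, 20), 0b11111111)]

matches = match_features(target, frame)
print(len(matches), "of", len(target), "features matched")
print("picture found:", target_found(matches, len(target)))
```

With these made-up values, three of the four features find a look-alike, which is enough for the phone to announce the picture. Checking that the matched features are also arranged like on the original picture is a separate step that this sketch leaves out.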

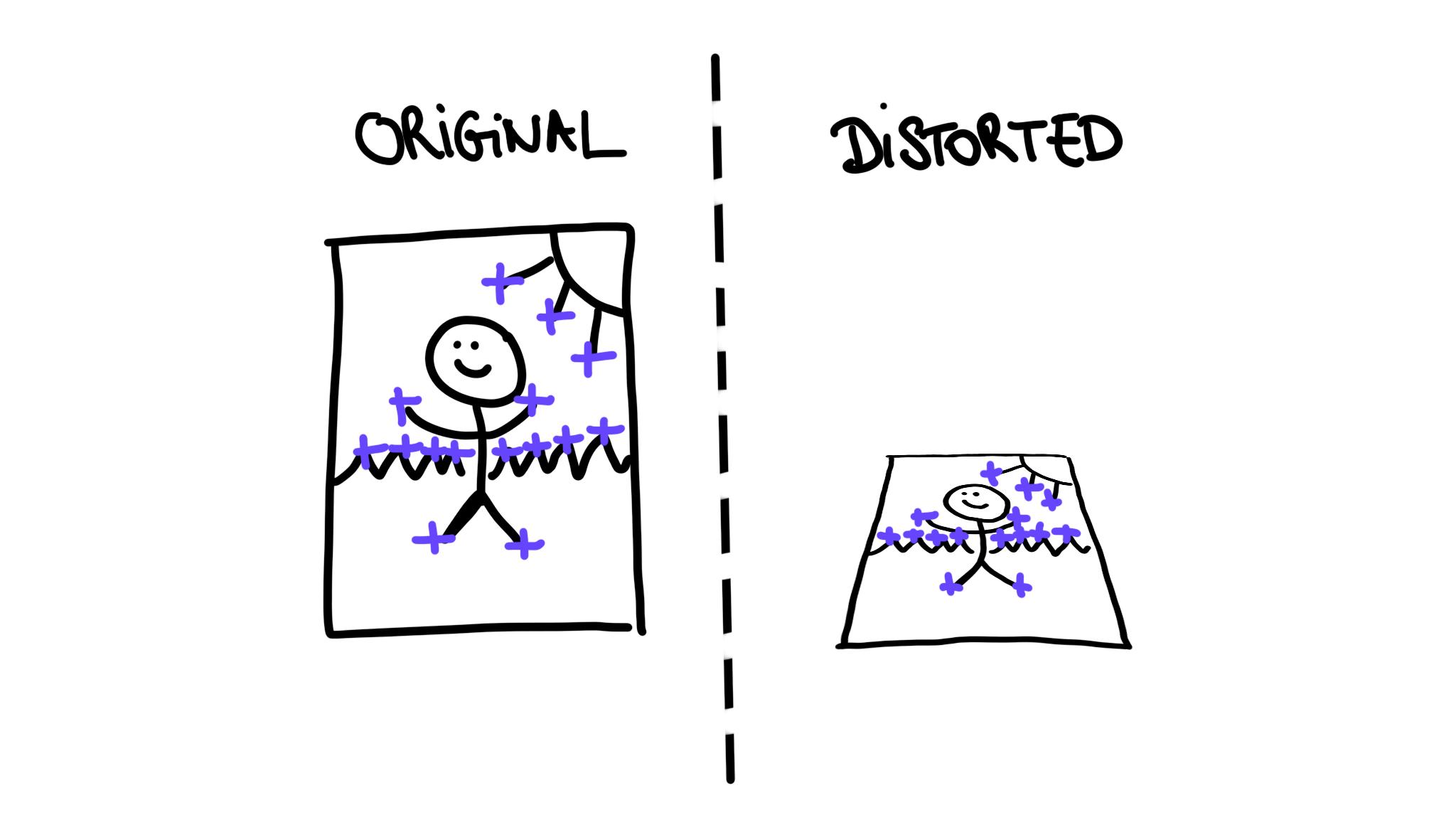

Now the last thing! We need to position the augmentation on the picture, and with the same orientation. In particular, if the image is rotated, the augmentation should be rotated accordingly (to power up this seamless magic we mentioned earlier). Because we already found the features, this is not too complicated. We just need to compute how the set of features is distorted: for example, if the image is facing us, the set will not be distorted at all, as this is the position we used to teach the phone. But if the picture is facing upwards, the set will be strongly contracted in one direction. We can express this distortion using The Maths™, and then simply use this to position and orient the augmentation. If we're brave, I might go deeper into that in follow-up articles or tutorials!

The phone learnt the feature arrangement from the original image. When the picture is oriented differently, the set of features appears distorted to the phone. This distortion indicates where and in which direction to add the augmentation.
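For the curious, here is one common way to write down such a distortion, sketched in plain Python. I am assuming a flat picture and exactly four matched points, in which case the distortion can be expressed as a 3x3 matrix called a homography. The function names (`estimate_homography`, `project`) and the coordinates are mine, chosen for the example, and a real toolkit would use many more points and reject bad matches.

```python
def solve_linear(a, b):
    """Solve the linear system a * x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        known = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - known) / m[r][r]
    return x


def estimate_homography(src, dst):
    """Find the 3x3 matrix mapping four src points onto four dst points."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def project(h, point):
    """Map a point of the original picture to its position in the video frame."""
    x, y = point
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return ((h[0][0] * x + h[0][1] * y + h[0][2]) / w,
            (h[1][0] * x + h[1][1] * y + h[1][2]) / w)


# Corners of the picture as the phone learnt it (facing the camera).
learnt = [(0, 0), (100, 0), (100, 100), (0, 100)]
# Where the same corners show up in the frame when the picture is tilted:
# the shape is squashed vertically, as described above.
seen = [(200, 100), (300, 100), (280, 130), (220, 130)]

H = estimate_homography(learnt, seen)
# Where should an augmentation anchored at the picture's centre be drawn?
print(project(H, (50, 50)))
```

Once `H` is known, any point of the original picture, not just the matched features, can be sent to its place in the video frame, which is how the augmentation follows the picture as it tilts.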

> the extra bit_over_

Ok, so, long story short: with Augmented Reality, we can add virtual content into the real world. That can be particularly amazing for education. First, you are manipulating tangible physical objects in the real world, grounding the activity in reality. Second, because of the virtual aspect, you can personalize the experience for the user: that means you can check how the learner is doing, and push them (more or less) gently when they need it. Finally, Augmented Reality activities support collaboration much better than computer-based activities [Billinghurst 2002]. In general, Augmented Reality is presented as a psychologically safe space to learn through failure [Koutromanos 2015]. All these features are extremely important for learning, as we will see in other articles.

It’s not all that easy though. Actually, many players of Pokemon Go deactivated the Augmented Reality feature because they found it annoying in the long run as it was more gimmicky than actually useful for the game [Alha 2019]. Similarly, most of the Augmented Reality educational apps available online don’t really make use of Augmented Reality: it’s a fun feature, but not at all exploited for educational purposes. Ideally, teachers should be much more involved in the process of developing Augmented Reality activities, but it’s a lot of effort and it requires specific expertise. Moreover, the result is usually only one specific activity covering only a small percentage of the educational curriculum. But some people are working on that, so let’s keep in touch and see what the future holds for us!

xoxo,

The Diverter

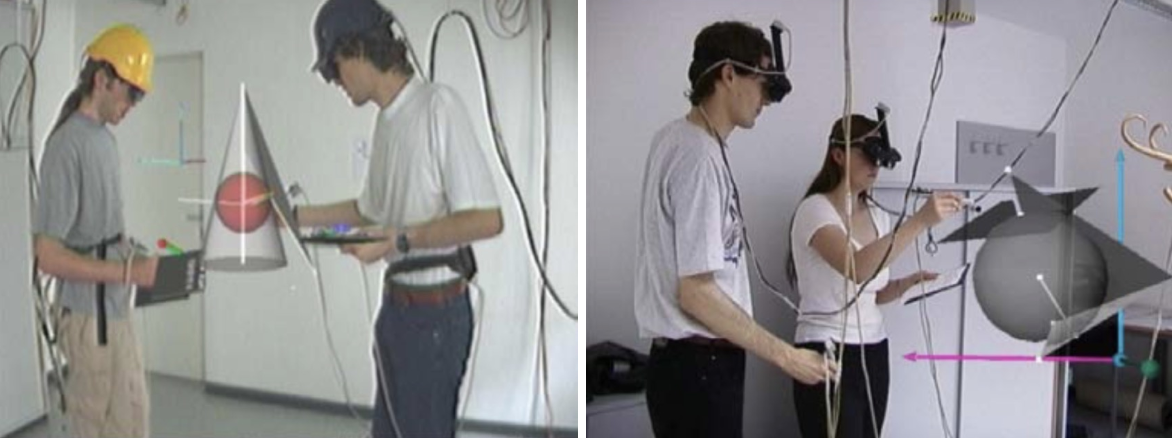

Bonus picture: some of the first Augmented Reality prototypes for mathematics [Kaufmann 2002]. They still make me laugh, for phallic reasons.

To go further:

If you are curious and want to start doing AR on your own, check out these links!

Notes:

[1] Some of you might have already heard about Mixed Reality in other contexts as well, describing products like HoloLens. As for me, I never found a definition of Mixed Reality that convinced me, so I won’t talk much about it here. If you are curious about the state of the debate, you can skim through this paper: “What is Mixed Reality?” [Speicher 2019].

[2] If you intend to make commercial applications, make sure that you have all the rights necessary to use the images.

References:

[Alha 2019] K. Alha, E. Koskinen, J. Paavilainen, and J. Hamari. Why do people play location-based augmented reality games: A study on Pokémon GO. 2019.

[Azuma 1997] R. T. Azuma. A survey of augmented reality. Presence: Teleoperators & Virtual Environments. 1997.

[Billinghurst 2002] M. Billinghurst and H. Kato. Collaborative augmented reality. 2002.

[Bradbury 1951] R. Bradbury. The Veldt. 1951.

[Dick 1968] P. K. Dick. Do Androids Dream of Electric Sheep? 1968.

[Kaufmann 2002] H. Kaufmann and D. Schmalstieg. Mathematics and geometry education with collaborative augmented reality. 2002.

[Koutromanos 2015] G. Koutromanos, A. Sofos, and L. Avraamidou. The use of Augmented Reality Games in Education: a review of the literature. 2015.

[Milgram 1995] P. Milgram, H. Takemura, A. Utsumi, and F. Kishino. Augmented reality: A class of displays on the reality-virtuality continuum. 1995.

[Speicher 2019] M. Speicher, B. D. Hall, and M. Nebeling. What is Mixed Reality? 2019.

[Van Krevelen 2007] R. Van Krevelen. Augmented reality: Technologies, applications, and limitations. 2007.
